Such, SUCH a great treat having Donovan come all the way from Houston for this presentation. He’s a fantastic presenter and his hands-on knowledge blows my socks off. I can’t thank him enough for making the trip.

IF YOU ATTENDED and you enjoyed the presentation – or even if you didn’t enjoy it – WE NEED YOUR HELP!

Take a few minutes and click on this link – it will tell us how we can improve next time, and hopefully lay the groundwork for Donovan to come back in a year or so.

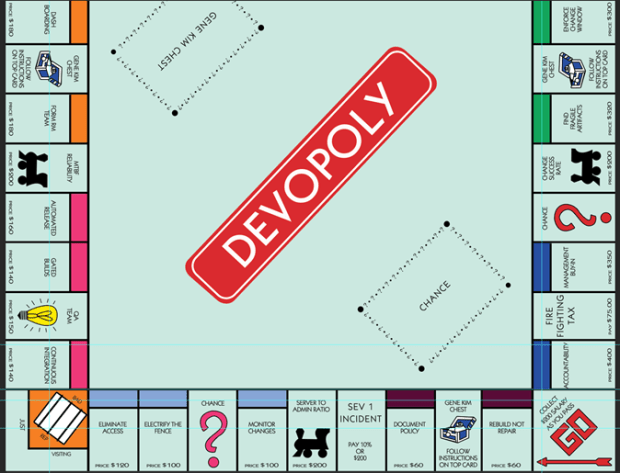

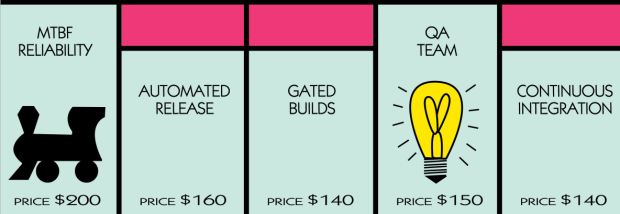

There’s a streaming video available here of Donovan’s Portland Area .NET User Group (PADNUG) presentation. It was outstanding! If you want to see one CI/CD release pipeline built out releasing a website to Azure in one hour – well sorry, that is not what he did. Instead, Donovan built out FOUR pipelines in an hour – using Node.js, Java, .NET and .NET Core – while describing step by step what he was doing and why, using VSTS, the Azure Portal, Visual Studio, and a cool command-line tool I hadn’t heard about called Yo Team!

The key takeaways from his presentation are as follows:

- If you only get one thing from our time together – Microsoft is for any language, any platform.

- Licensing costs should not be a blocker for us. It’s like $10/person per month, and that’s only for large teams over 5. And with MSDN you get these benefits included.

- Lastly, if you attended and enjoyed the presentation – give a shout out to Donovan on Twitter. He’s thrown down the gauntlet – “we have the best build and release tools on the market, if you don’t agree reach out to me on Twitter and be specific!” So, if you don’t think Microsoft’s build tools will work with Jenkins, or Java apps, Node.js, SonarQube etc – throw down and enter the squared circle my man!

My notes are below. These aren’t notes of his presentation, as those were no-slide demos, but comments Donovan made during the day that give DevOps context to his presentation. As the saying goes, “If I had more time it would have been shorter!”

Thoughts on Strategy

Make incremental changes in ramping up your maturity. It’s safer. If you are deploying manually, there’s one part that you fear the most. Focus on that.

“If it hurts, do it more often.” (You are incurring more risk by deploying less frequently.) Lean forward into that and think about how you can fix it. Focus on the scariest part first.

If you need prescriptive guidance – hire a consultant. (Like, I don’t know, Microsoft Premier for Developers maybe!) They can ask questions, pinpoint pain points for your org and provide a specific roadmap.

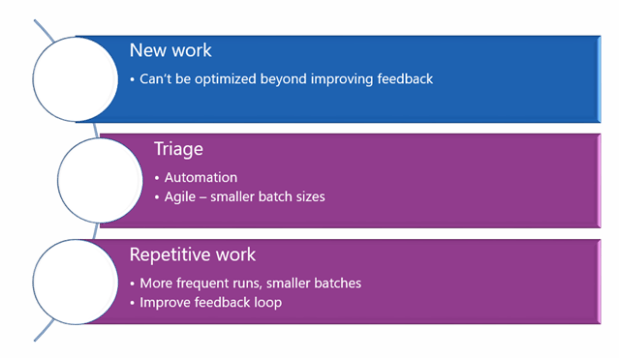

Why do you have to ask permission from your manager? Your job as an engineer is to continuously deliver value. You should need to ask permission if you DON’T want to set up CI. No need to stop and learn for months – focus on what hurts the most, and focus on that one thing. What is the one thing that breaks every time you run a deployment? Otherwise you end up paying a tax doing it manually. Find something small to focus on – don’t try to do it all at once. It might take you months, after which you may have an amazing portion of your pipeline that was manual and is now automated. That will get people excited. And after that last build that blows up, when people start playing the “Not it” game – guess what, you get to go home early!

And if you’re stuck on exactly where do we start? – http://donovanbrown.com/post/How-do-we-get-started-with-DevOps (really, check this post out. It’s good enough to mention twice!)

- DevOps Interviews – Interview with Aaron Bjork (feature flags is excellent) – all the interviews are here: https://channel9.msdn.com/blogs/devops-interviews. The one on feature flags as used by VS product teams – https://channel9.msdn.com/Blogs/DevOps-Interviews/Interview-with-Aaron-Bjork-Consistency (This answers that age old question “How did you guys do it?”)

- Where do we start? – http://donovanbrown.com/post/How-do-we-get-started-with-DevOps – What is our starting point? Find the part of the deployment you currently have today that hurts the most and focus on that pain point.

Agile Is Your Starting Place

The key question is – “Can you produce releasable bits of code?” Until your Agile processes are strong – not perfect but mature – you can’t see significant gains with DevOps. The business needs to understand that we need to produce increments of shippable code. DevOps is the “second decade of Agile.” Once Agile is in place you will realize that you’re not able to ship as fast as you can produce features.

What limits us so far – four years after “The Phoenix Project”? Really it’s still slowed by adoption of Agile. 40% of orgs have adopted Agile. That’s a dead number, as it’s only dev teams that are championing this. The rest of the org is expecting waterfall, requirements, dates.

DBAs are a frequent blocking point if you slice vertically and not horizontally. “I’ll give you 3 months, if you promise not to make any further changes to the schema, ever.” “That’s crazy!” “If you’re going to change it anyway, why should I invest 3 months in this now? Your first step could start with login functionality – in 3 weeks we have a login page, username and credentials, authentication and identification.” Don’t let a recalcitrant DBA stop you in your tracks.

One week sprints really worked well (at one company) for Donovan in his consulting past- with product owner/business owner. After 8 weeks, needed to get a knowledgeable person (replacing old business owner) – and a sigh of relief, finally back on track. And hire a scrum master. That person needs to be able to say no to the boss. For a good product owner, all they have to do is understand the business they are trying to serve – and tell me of two backlog items which one is a priority. Scrum Masters must protect team from unrealistic expectations, on this date, I want these features – perfect quality. Quality is not negotiable – either move date or lose features.

Database Goodies

SQL Server Data Tools (SSDT) – version every object on SQL Server including permissions and roles. Stored in a database project. (this is free. Evaluate, see if it meets your need.) An amazing tool, been around for 10 years, very little known – our best kept secret! If SSDT for some reason isn’t scaling or working for you – ReadyRoll from RedGate is also a good option.

- SSDT videos here – https://channel9.msdn.com/Shows/Visual-Studio-Toolbox

- Database DevOps with Redgate – https://channel9.msdn.com/Shows/Visual-Studio-Toolbox/Database-DevOps-with-Redgate-Data-Tools

- Database Unit Testing – https://channel9.msdn.com/Shows/Visual-Studio-Toolbox/SQL-Server-Database-Unit-Testing-in-your-DevOps-pipeline

- SSDT for Visual Studio – https://channel9.msdn.com/Shows/Visual-Studio-Toolbox/SQL-Server-Data-Tools-for-Visual-Studio?term=ssdt%20dmitry

- Using SSDT for your DevOps Pipeline: https://channel9.msdn.com/Shows/Visual-Studio-Toolbox/SQL-Server-Data-Tools-in-your-DevOps-pipeline?term=ssdt%20dmitry

Last but not least – this amazing site brought to my attention from Jorge Segarra. An awesome resource for all things DevOps for you DBA guys! https://www.microsoft.com/en-us/sql-server/developer-get-started/sql-devops/

This may require breaking up into different schemas (like a virtual namespace) vs one huge project. Schemas are here now and available; they will allow you to segment databases that you create – no 3000 tables/etc in one untranslatable blob. (BACPAC is schema and data, DACPAC is just schema. You can run pre/post scripts – make sure each one is idempotent so if it runs twice you don’t double your db size!)
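To make the idempotency point concrete, here is a minimal sketch of a post-deployment seed step that is safe to run on every release. It uses Python's built-in `sqlite3` as a stand-in for SQL Server, and the `OrderStatus` table and its rows are hypothetical names invented for illustration. In a real SSDT post-deployment script you would express the same idea in T-SQL, for example with an `IF NOT EXISTS` guard or a `MERGE` statement.

```python
import sqlite3


def run_post_deploy(conn):
    """Seed reference data; intended to be safe on every deployment."""
    # Hypothetical lookup table, created only if it is not already there.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS OrderStatus ("
        "Id INTEGER PRIMARY KEY, Name TEXT NOT NULL)"
    )
    seed_rows = [(1, "Pending"), (2, "Shipped"), (3, "Cancelled")]
    # INSERT OR IGNORE skips rows whose primary key already exists,
    # so a second run adds nothing instead of duplicating the data.
    conn.executemany(
        "INSERT OR IGNORE INTO OrderStatus (Id, Name) VALUES (?, ?)",
        seed_rows,
    )
    conn.commit()


def row_count(conn):
    return conn.execute("SELECT COUNT(*) FROM OrderStatus").fetchone()[0]


conn = sqlite3.connect(":memory:")
run_post_deploy(conn)
run_post_deploy(conn)  # simulate the script running twice
print(row_count(conn))  # prints 3, not 6
```

A plain `INSERT` in the same script would fail or duplicate rows on the second run, which is exactly the "double your db size" problem.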

Migrating your CRUD statements from sprocs to Entity Framework may be a win to move away from thousands of unwieldy and clunky INS/UPD/Del type statements.

Integration Testing (Selenium etc)

One company said “DevOps = Automation”. So they had thousands of unit and integration tests. Code would flow with no human intervention from commit to production. As soon as they released a CSS change to prod, though, they started losing money. When they looked at the ecommerce website, they found the change had made the final button on the summary page and its text the same color – this resulted in a blank button! Automation still clicked it because the ID of the button did not change. So, automate everything you CAN – don’t try to automate things you shouldn’t.

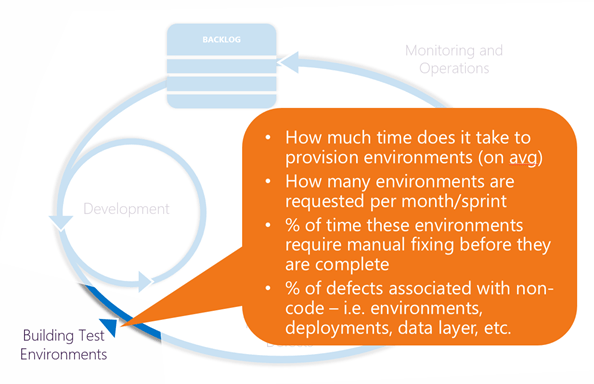

Best practices for building integration tests: the #1 thing is making sure the assumptions you made when you recorded your automation are still valid. Usually the database in QA is the failing point: the code isn’t broken but the data it runs against doesn’t fit assumptions. (See Redgate’s excellent tool SqlClone here.) They are a few versions behind with their Selenium driver, using IE.

“We spend more and more time with peer testing than we do with auto testing on the Microsoft VS team. As we’re our own first customer, there’s a lot of A/B testing that happens. Integration testing happens very little, unless there’s a performance issue – then we move it to GoBig environment where we can perf test.” Per Brian Harry – “Unapologetically we do testing in production.”

Donovan ran a team in Akron where they were not allowed to write a line of code until they wrote a UI test (in CodedUI, but now we do this in Selenium). They wrote automation before they wrote code – wrote so it fails, then refine until it passes. This and a manual test case – which can be given to a stakeholder in plain English (“oh, I didn’t expect I should be pressing that button”) – there’s nothing better than a manual test case to be your new acceptance criteria. Automation and UI tests are brittle – they become brittle/break, that didn’t go away, but having it there forced my teams to do the right thing early. (These were one week sprints, Weds to Weds.) Do it more often if it hurts! Finding clever ways of making BACPAC/data layer work.

VS teams – forced them to move more functionality to behind the scenes, UI testing. Make unit testing viable. To those nerd architects out there claiming that “Unit tests are worthless”! – we run 41000 unit tests in VSTS and it takes 6 minutes. You can’t run integration tests that fast.

Infrastructure as Code (ARM templates) and Configuration Management (PowerShell DSC)

ARM (Azure Resource Manager) is the name of the game here to get Infrastructure as Code working. This is a much better process than spinning up resources manually for example. (Not configuration here – doesn’t include IIS, etc. )

Recommendation: Introduce your Infra team to ARM templates. ARM templates are Azure only but you can use Terraform to point to Azure/multi-cloud solution so you can move resources to Google/AWS etc.
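For anyone who hasn't seen one, an ARM template is just a JSON document describing the resources you want. The sketch below builds a minimal template for a single storage account as a Python dictionary and prints it as JSON. The `build_storage_template` helper and the `storageAccountName` parameter name are my own illustrative choices, and the `apiVersion` shown is an assumption you should check against the current Azure documentation before deploying anything.

```python
import json


def build_storage_template(sku_name="Standard_LRS"):
    """Return a minimal ARM template (as a dict) for one storage account."""
    return {
        "$schema": "https://schema.management.azure.com/schemas/"
                   "2015-01-01/deploymentTemplate.json#",
        "contentVersion": "1.0.0.0",
        "parameters": {
            # Supplied at deployment time so the template stays reusable.
            "storageAccountName": {"type": "string"}
        },
        "resources": [
            {
                "type": "Microsoft.Storage/storageAccounts",
                "apiVersion": "2016-01-01",  # assumption: verify before use
                "name": "[parameters('storageAccountName')]",
                # Deploy into the same region as the resource group.
                "location": "[resourceGroup().location]",
                "sku": {"name": sku_name},
                "kind": "Storage",
                "properties": {},
            }
        ],
    }


template = build_storage_template()
rendered = json.dumps(template, indent=2)
print(rendered)
```

Because the template is plain text, it can live in source control next to the application code and be reviewed and versioned the same way.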

Configuration as Code – using PowerShell DSC (Desired State Configuration) – take the configuration of that server and codify it as well. (I demand you have port 80 open, .NET 4.5, IIS available, these security roles etc.) The server installs everything it needs to bring itself to a ready state. Every 15 minutes it checks to make sure it still fits the standard config. So, if it finds that IIS is turned off, it will detect that the configuration is drifting and make a note in the log. You can also configure it to bring the server back to the standard state.

PowerShell DSC works for both Linux and Windows environments. And, it’s free! (p.s. Chef uses DSC as well so it’s a layer on top of a layer.)

Recommendation: Start with what you have and what’s free with PowerShell DSC and THEN make an evaluation if there are features that are missing. This could take <1 hour. From Donovan – “I encourage you to ping me on Twitter if DSC is not getting the job done. If we can’t fix it fast enough, then go to Chef or a competitor.”

DSC can be done onprem/etc. It’s idempotent, which means you can run it blindly a million times in a row and the server will stay exactly the way it needs to.
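The drift-detection and idempotency ideas can be sketched in a few lines. This is not DSC itself, just a toy Python model of the concept: a desired state, a check that reports drift, and an optional auto-correct step. All names here (`DESIRED_STATE`, `detect_drift`, `converge`, the setting keys) are hypothetical. The two behaviors roughly correspond to DSC's monitor-only and auto-correct configuration modes.

```python
# Hypothetical desired state for a web server.
DESIRED_STATE = {
    "iis_enabled": True,
    "port_80_open": True,
    "dotnet_version": "4.5",
}


def detect_drift(actual, desired):
    """Return every setting whose actual value differs from the desired one."""
    return {
        key: {"expected": value, "actual": actual.get(key)}
        for key, value in desired.items()
        if actual.get(key) != value
    }


def converge(actual, desired, auto_correct=False):
    """Report drift; if auto_correct is set, also reset drifted settings."""
    drift = detect_drift(actual, desired)
    if auto_correct:
        for key in drift:
            actual[key] = desired[key]
    return drift


# Someone turned IIS off on this server.
server = {"iis_enabled": False, "port_80_open": True, "dotnet_version": "4.5"}

first = converge(server, DESIRED_STATE, auto_correct=True)
second = converge(server, DESIRED_STATE, auto_correct=True)
print(first)   # {'iis_enabled': {'expected': True, 'actual': False}}
print(second)  # {} (nothing left to fix, so a repeat run changes nothing)
```

The second call returning an empty result is the idempotency property: once the server matches the desired state, running the check again is a no-op.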

- PowerShell with ARM template overview – https://docs.microsoft.com/en-us/azure/azure-resource-manager/resource-group-template-deploy

- Ops oriented Microsoft Virtual Academy course – https://mva.microsoft.com/learning-path/powershell-beginner-12

- Channel 9 video – Getting Started with PowerShell DSC – https://channel9.msdn.com/Series/Getting-Started-with-PowerShell-Desired-State-Configuration-DSC

- Advanced PowerShell with PowerShell DSC – https://channel9.msdn.com/Series/Advanced-PowerShell-Desired-State-Configuration-DSC-and-Custom-Resources

- Steve Murawski site with DSC links: https://powershell.org/author/stevenmurawski/

- Note his book on “DevOps: The Ops Perspective”, here at this central link – there’s both gitHub and – https://powershell.org/ebooks/ – and https://www.gitbook.com/@devops-collective-inc?page=1

- I also liked this 30-day journey on DSC that one Ops person blogged. Lots of great learning stories and references here. http://donovanbrown.com/post/PowerShell-Desired-State-Configuration-(DSC)-Journey-by-Jacob-Benson

Self Service VM’s with Dev/Test Labs

Struggling with self service VM’s and exposing costs of resources to the consumers you service? (I.e. that 5 9’s coming at a horrendous price that is shielded from end users/business?) Think about Dev/Test Lab. This is self service with no blank check: users can spin up a sandbox (using the allocated $ your customers buy each month), and the lab enforces which VM images they are allowed to spin up.

Dev/Test Lab documentation – https://docs.microsoft.com/en-us/azure/devtest-lab/

Overview and trial site hub – https://azure.microsoft.com/en-us/services/devtest-lab/

Starting documentation, very good – https://docs.microsoft.com/en-us/azure/devtest-lab/devtest-lab-overview

Microservices

An advantage because releases are now micro-sized. No more having to track down 6 months back with a dictionary of changes. Would eliminate webmethods – ESB. You can rev very quickly on microservices without jeopardizing the application, as they are very small, atomic pieces of functionality.

Meant to be combined with containers.

Not a silver bullet. Evaluate it. Microsoft is going there on the VSTS team. Docker support is built into VSTS – it can publish to a registry. Even TFS 2015 update 3-4 has Docker support as well. To run containers you need Windows Server 2016 or the Anniversary edition of Windows 10, or Linux containers.

- Exploring Microservices with Visual Studio and Docker: https://mva.microsoft.com/en-US/training-courses/11796?l=TATeapmEB_7704984382

- Getting Started with .NET and Docker – https://channel9.msdn.com/events/Connect/2016/172?term=docker

- Great book on Microservice architecture and design – free eBook – https://www.microsoft.com/net/download/thank-you/microservices-architecture-ebook (notice there’s a link here to a reference application you can pull down)