Recently faced an issue where a client had a memory allocation issue on one of their servers. I’m not going to deep dive into any of these, but here were some of the tools I used in tracking down the culprit:

- Go through Eventvwr and look at any error messages. There’s a list of error codes on MSDN.

- Get a process dump (full please!) using procdump. Configure with –ma –x to capture a dump on failure.

- In Windbg, open the crash dump and use !analyze –v. There’s an extensive set of help files on windbg.

- DebugDiag for crash analysis, slow performance, memory leak analysis, and performance analysis.

- ApplicationVerifier (appverif.exe) – for subtle programming errors. (i.e. heap corruption, incorrect handles). Since this must be run client-side, more of a dev than a production tool. This doesn’t require a process dump.

- Perhaps look at a self dump generation in code.

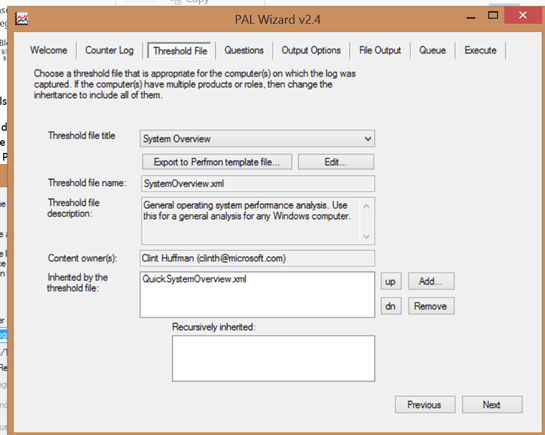

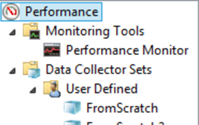

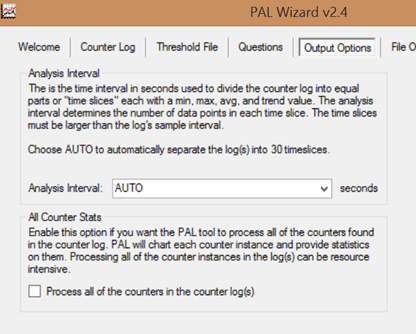

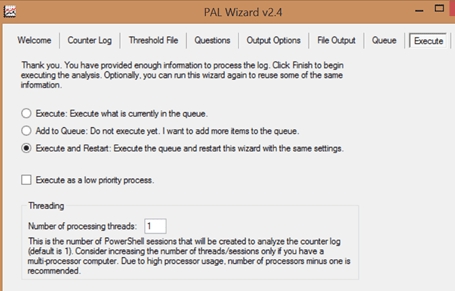

- Perfmon/PAL to look at memory leaks. This is for growing heaps (where memory is allocated but not deallocated), handle leaks (handles are created but not freed), and rising thread count. Memory allocation issues are very troublesome to catch and there’s a ton of third party tools out there to help – think RationalPurifier or Insure++.

In a little more detail:

-

For a crash:

- Windbg debugger is xcopy deployable.

- DebugDiag runs as a service, and monitors the process – if it crashes, it creates a dump.

- Adplus – this is a command line tool you can set with –crash –p{processID}

-

For a hung process:

- Task Manager (right mouse click to create a crash dump)

- DebugDiag (process tab, rt-mouse click, create full user dump)

- Adplus –hang –p{pid}

-

For a memory leak:

- CLR memory profiler

- DebugDiag (our Swiss army knife!) – leak track, rule and user dump

- Umdh (old school – command line)

- For .NET, there’s sos.dll and psscor4.dll – these are debugger extensions to analyze .NET dump stacks.