I’m not even pretending that this is definitive or comprehensive. But, at 11 pm, here are a few notes and some helpful links and resources as a companion to a presentation I wrote earlier today.

Migrating Workflows

If you’re an AWS developer thinking of exploring your options in the Azure world, here are some things to keep in mind:

- You’ve already hit the big challenges in moving to the cloud. It’s much easier to move workloads from AWS to Azure than from on-prem to the cloud.

- The majority of AWS functionality has a counterpart in Azure. My take is that AWS started with a 3-year head start in the IAAS space, and that’s their strong point. Azure has a much stronger backbone and pedigree where the cloud really gets interesting: PAAS/SAAS scenarios. The feature competition between Amazon, Microsoft and Google is going to continue accelerating, which is a very good thing for you.

- VM conversion from EC2 is easy; PAAS/SAAS conversion is tougher. None of these are truly apples-to-apples (for example, AWS Lambda -> Azure Functions).

- Availability models are very different

- Project specific – know the integration points and SLAs and underlying platform services.

- Deployment models are better in Azure!

Migration is remarkably easy – basically you follow some simple steps using Azure Site Recovery.

- Azure Site Recovery documentation: https://azure.microsoft.com/en-us/blog/furthering-cloud-hyper-scale-and-expanding-our-hybrid-cloud/

- Great article here – https://azure.microsoft.com/en-us/blog/seamlessly-migrate-your-application-from-aws-to-azure-in-4-simple-steps/

Amazon AWS to Azure – General Resources

TechNet Radio Series from Microsoft:

- Part 1: Getting Started (note I’m not too crazy about this one)

- Part 2: Networking

- Part 3: Storage

- Part 4: Virtual Machines, VM Scale Sets and Containers

- Part 5: Migrating to Azure

Great Pluralsight video – I loved this. An excellent starting point for people new to PAAS architecture.

Architecture Overview

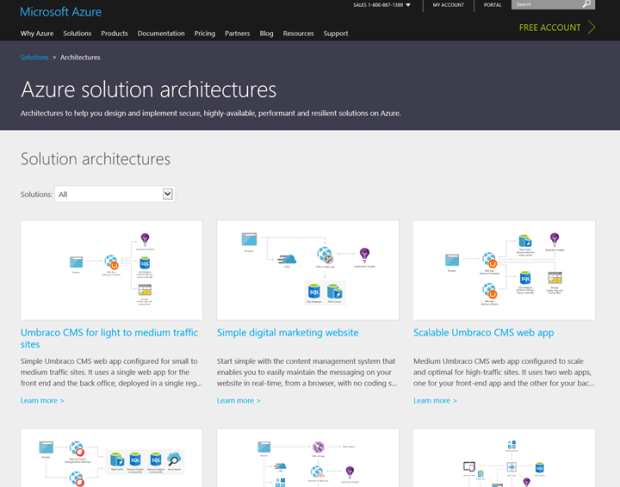

Wonderful set of reference architectures – this is a terrific link: https://azure.microsoft.com/en-us/solutions/architecture/

(see below for snapshot)

And a central repository for more whitepapers: https://docs.microsoft.com/en-us/azure/architecture-overview

Another outstanding book – free – on Cloud Design Patterns. This is a terrific book and it has some outstanding reference works that can pair with this:

Web Development Best Practices Poster (and see the link above for a Scalability poster as well)

(screenshot below)

Now let’s talk a little more about how some of these components in the AWS space map over to the Azure space.
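As a quick cheat sheet for the sections that follow, here is a rough sketch of the nearest-counterpart mapping as a small Python lookup. The pairings are my own summary of the commonly cited equivalents, not an official list, and as noted earlier none of them are truly apples-to-apples. The names `AWS_TO_AZURE` and `azure_counterpart` are just illustrative.

```python
# Rough AWS -> Azure counterparts for the services covered in this post.
# These are nearest equivalents, not drop-in replacements.
AWS_TO_AZURE = {
    "EC2": "Virtual Machines",
    "Lambda": "Azure Functions",
    "S3": "Blob Storage",
    "Kinesis Streams": "Event Hubs",
    "Kinesis Analytics": "Stream Analytics",
    "ElastiCache": "Azure Redis Cache",
    "DynamoDB": "DocumentDB",
    "API Gateway": "API Management",
    "CloudFormation": "ARM Templates",
}


def azure_counterpart(aws_service: str) -> str:
    """Return the nearest Azure service, or a note when none is listed."""
    return AWS_TO_AZURE.get(aws_service, "no counterpart listed here")


if __name__ == "__main__":
    # Print the whole table, one pairing per line.
    for aws, azure in AWS_TO_AZURE.items():
        print(f"{aws:18} -> {azure}")
```

WebJobs is left out on purpose: I don't think of it as having a single obvious AWS twin.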

Azure Functions

- Azure Functions – this is from March 2016, when it was brand new: https://azure.microsoft.com/en-us/blog/introducing-azure-functions/

- Excellent overview of deployment strategies with Azure Functions – https://blog.kloud.com.au/2016/09/04/azure-functions-deployment-strategies/ (3 models explored including msdeploy and my favorite, Git)

- This article explores the differences in deployment between Azure Functions and AWS: https://blog.kloud.com.au/2016/07/02/code-management-in-serverless-computing-aws-lambda-and-azure-functions/

- Very good Scott Hanselman article: http://www.hanselman.com/blog/WhatIsServerlessComputingExploringAzureFunctions.aspx

- https://docs.microsoft.com/en-us/azure/azure-functions/functions-overview

- https://docs.microsoft.com/en-us/azure/azure-functions/functions-create-first-azure-function

Blob Storage

Blob Storage – very good walkthrough: https://docs.microsoft.com/en-us/azure/storage/storage-dotnet-how-to-use-blobs

- And a better one – more of a dev walkthrough on creating/accessing blob storage https://docs.microsoft.com/en-us/azure/storage/storage-dotnet-how-to-use-blobs

- Microsoft Azure Storage Explorer (MASE) is a free, standalone app from Microsoft that enables you to work visually with Azure Storage data on Windows, OS X, and Linux.

- Getting Started with Azure Blob Storage in .NET

- Transfer data with the AzCopy command-line utility

- Get started with File storage for .NET

- How to use Azure blob storage with the WebJobs SDK

And more general notes on storage models in Azure:

- Azure Storage – https://docs.microsoft.com/en-us/azure/storage/storage-create-storage-account

- Azure Storage Videos – https://azure.microsoft.com/en-us/resources/videos/index/?services=storage

- https://azure.microsoft.com/en-us/resources/videos/get-started-with-azure-storage/
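If you're coming from S3, it may help to see how a blob is addressed: storage account, then container, then blob name. Below is a minimal sketch in plain Python (no Azure SDK) that builds the endpoint URL and checks the naming rules as I understand them. The helper name `blob_url` and the sample account and container names are made up for illustration, and the URL alone won't get you into a private container; you'd still need a key or SAS token.

```python
import re
from urllib.parse import quote


def blob_url(account: str, container: str, blob_name: str) -> str:
    """Build the endpoint URL for a blob in a storage account."""
    # Storage account names: 3-24 characters, lowercase letters and digits only.
    if not re.fullmatch(r"[a-z0-9]{3,24}", account):
        raise ValueError(f"invalid storage account name: {account!r}")
    # Container names: 3-63 characters, lowercase letters, digits and single
    # hyphens, starting and ending with a letter or digit.
    if not (3 <= len(container) <= 63
            and re.fullmatch(r"[a-z0-9]+(?:-[a-z0-9]+)*", container)):
        raise ValueError(f"invalid container name: {container!r}")
    # Percent-encode the blob name but keep "/" so virtual folders survive.
    return f"https://{account}.blob.core.windows.net/{container}/{quote(blob_name)}"


if __name__ == "__main__":
    # Hypothetical account and container names, for illustration only.
    print(blob_url("mystorageacct", "images", "logo.png"))
```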

Event Hubs

- Documentation hub – https://docs.microsoft.com/en-us/azure/event-hubs/

- https://azure.microsoft.com/en-us/resources/videos/index/?services=event-hubs

- https://azure.microsoft.com/en-us/resources/videos/build-2015-best-practices-for-creating-iot-solutions-with-azure/

Azure Stream Analytics

- 12 minute intro video with Scott H: https://azure.microsoft.com/en-us/resources/videos/introduction-to-azure-stream-analytics-with-santosh-balasubramanian/

- 2 minute Stream Analytics overview – a little marketing oriented. https://azure.microsoft.com/en-us/resources/videos/azure-stream-analytics-overview/

- Walkthrough doc – https://docs.microsoft.com/en-us/azure/Stream-Analytics/stream-analytics-build-an-iot-solution-using-stream-analytics

Redis

- Creating a web app – https://docs.microsoft.com/en-us/azure/redis-cache/cache-web-app-howto

- Azure Redis Cache 101 – https://azure.microsoft.com/en-us/resources/videos/azure-redis-cache-101-introduction-to-redis/

- Application Patterns – https://azure.microsoft.com/en-us/resources/videos/azure-redis-cache-102-application-patterns/

DocumentDB

- https://docs.microsoft.com/en-us/azure/documentdb/documentdb-resources

- DocumentDB videos – https://azure.microsoft.com/en-us/resources/videos/index/?services=documentdb

- 13 minute DocumentDB video on partitioning: https://azure.microsoft.com/en-us/resources/videos/predictable-performance-with-documentdb/

- What is DocumentDB? 2 minute overview video – https://azure.microsoft.com/en-us/resources/videos/what-is-azure-documentdb/

- Using DocumentDB on Linux with your Node.js app – 13 minute video – https://azure.microsoft.com/en-us/resources/videos/azure-demo-getting-started-with-azure-documentdb-on-nodejs-in-linux/

API Management

- https://docs.microsoft.com/en-us/azure/api-management/api-management-howto-create-apis

- https://azure.microsoft.com/en-us/resources/videos/api-management-in-under-5-minutes/

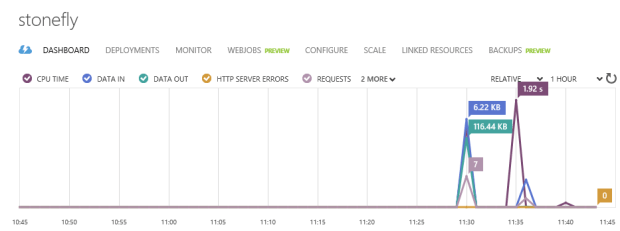

WebJobs

- Walkthrough of sample: https://docs.microsoft.com/en-us/azure/app-service-web/web-sites-create-web-jobs

- Central repository of links: https://docs.microsoft.com/en-us/azure/app-service-web/websites-webjobs-resources

- Scott H overview – older but I like the “hotel” metaphor: http://www.hanselman.com/blog/IntroducingWindowsAzureWebJobs.aspx

- This outside blogger loved WebJobs and listed 10 reasons why, including scale-out (parallelism) and GitHub deployment. I loved it – https://www.troyhunt.com/azure-webjobs-are-awesome-and-you/

ARM Templates

- Overview – https://docs.microsoft.com/en-us/azure/azure-resource-manager/resource-group-overview – notice the 4 simple guidelines for best practice/maintainability

- ARM template best practices – https://docs.microsoft.com/en-us/azure/azure-resource-manager/resource-manager-template-best-practices

- Quickstart templates you can use for a starting point – https://azure.microsoft.com/en-us/resources/templates/

- Very good overview of ARM constructs from an outside-MSFT point of view: http://rickrainey.com/2016/01/19/an-introduction-to-the-azure-resource-manager-arm/

- ARM DevOps Jump Start: (40 minute video) – https://mva.microsoft.com/en-us/training-courses/azure-resource-manager-devops-jump-start-8413?l=4oafp4Jz_8504984382
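To give a feel for what an ARM template actually looks like, here is a minimal sketch that builds one as a Python dict and prints it as JSON. It declares a single storage account and takes the account name as a parameter. The function name `storage_account_template` and the parameter name `storageAccountName` are my own choices for illustration, and the schema URL and `apiVersion` are the ones I believe were current when these links were gathered; check the quickstart templates above for up-to-date values before deploying anything.

```python
import json


def storage_account_template() -> dict:
    """Return a minimal ARM template that declares one storage account."""
    return {
        "$schema": "https://schema.management.azure.com/schemas/2015-01-01/deploymentTemplate.json#",
        "contentVersion": "1.0.0.0",
        # Parameters are supplied at deployment time.
        "parameters": {
            "storageAccountName": {"type": "string"},
        },
        "resources": [
            {
                "type": "Microsoft.Storage/storageAccounts",
                "apiVersion": "2016-01-01",
                # Bracketed strings are ARM template expressions, evaluated
                # by Resource Manager rather than by Python.
                "name": "[parameters('storageAccountName')]",
                "location": "[resourceGroup().location]",
                "sku": {"name": "Standard_LRS"},
                "kind": "Storage",
                "properties": {},
            }
        ],
    }


if __name__ == "__main__":
    print(json.dumps(storage_account_template(), indent=2))
```

In practice you would usually write the JSON by hand or start from a quickstart template; generating it from code is just a convenient way to show the structure here.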