John Weers is Senior Manager of DevOps and Software Quality at Micron. He works to build highly capable teams that trust each other, build high quality software, deliver value with each sprint and realize there’s more to life than work.

Note – these and other interviews and case studies will form the backbone of our upcoming book “Achieving DevOps” from Apress, due out in mid 2019 and available now for pre-order!

Kickstarting a DevOps Culture

Some initial background – I lead on a team of passionate DevOps engineers/managers who are tasked with making our DevOps transformation work. While our group is only officially about 5 months old, we’ve all been working this separately for quite a while.

About every two weeks we have a group of about 15 DevOps experts that get together and talk – we call them the “design team”. That’s a critical touch point for us – we identify some problems in the organization, talk about what might be the best practice for them, and then use that as a base in making recommendations. So that’s how we set up a common direction and coordinate; but we each speak for and report to a different piece of the org. That’s a very good thing – I’d be worried if we were a separate group of architects, because then we’d get tuned out as “those DevOps guys”. It’s a different thing altogether if a recommendation is coming from someone working for the same person you do!

We’ve made huge strides when it comes to being more of a learning-type organization – which means, are we risk-friendly, do we favor experimentation? When there’s a problem, we’re starting to focus less on root cause and ‘how do we prevent this disaster from happening again’ – and more on, what did we learn from this? I see teams out there trying new things, experimenting with a new tool for automation – and senior management has responded favorably.

Our movement didn’t kick off with a bang. About 5 years ago, we came to the realization that our quality in my area of IT was poor. We knew quality was important, but didn’t understand how to improve it. Some of the software we were deploying was overly complex and buggy. In another area, the issue wasn’t quality but time – the manual test cycle was too long, we’re talking weeks for any release.

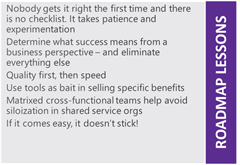

You can tell we’re making progress by listening to people’s conversations – it’s no longer about testing dates or coverage percentages or how many bugs we found this month, but “how soon can we get this into production?” – most of the fear is gone of a buggy release as we’ve moved up that quality curve. But it has been a gradual thing. I talked to everyone I could think of at conferences, about their experiences with DevOps. It took a lot of trial and error to find out what works with our organization. No one that I know of has hit on the magical formula right off the bat; it takes patience and a lot of experimentation.

Start With Testing

Our first effort was to target testing – automated testing, in our case using HP’s UFT and Quality Center platform. But there never was an all-hands-on-deck, call to “Do DevOps!” – that did happen, but it came two years later. We had to lay down the groundwork by focusing first on quality, specifically testing.

We’re five years along now and we are making progress, but don’t kid yourself that growth or a change in mindset happens overnight. Just the phrase “Shift Left” for example – we did shift our quality work earlier in the development process by moving to unit testing and away from UI/Regression testing. We found that it decreased our bugs in production by a very significant amount.

We went through a few phases – one where we had a small army of contractors doing test automation and regression testing against the UI layer. Quality didn’t improve, because of the he-said/she-said type interactions between the developers and QA teams in their different siloes. We tried to address interactions between different applications and systems with integration testing, and again found little value. The software was just too complex. Then we reached a point where we realized the whole dynamic needed to be rethought.

So, we broke up the QA org in its entirety, and assigned QA testers on each of our agile teams and said – you guys will sink or swim as a team. Our success with regression testing went up dramatically, once we could write tests along with the software as it was being developed. Once a team is accountable for their quality, they find a way of making it happen.

We got resistance and kickback from the developers, which was a little surprising. There was a lot of complaint when we first started requiring developers to write unit tests along with their code of it not being “value added” type activity. But we knew this was something that was necessary – without unit tests, by the time we knew there was a problem in integration or functional testing, it would often be too late to fix it in time before it went out the door.

So, we held the line and now those teams that have a comprehensive unit testing suite are seeing very few errors being released to production. At this point, those teams won’t give up unit testing because it’s so valuable to them.

“Shift Left” doesn’t mean throwing out all your integration and regression testing. You still need to do a little testing to make sure the user experience isn’t broken. “Shift Left” means test earlier in the process, but in my mind it also means that “our team” owns our quality.

Culture and Energy are the Limiting Points

If you want to “Do DevOps” as a solo individual, you’ll fail. You need other experts around you to share the load and provide ideas and help. A group is stronger than any individual.

Can I say – the tool is not the problem, ever? It’s always culture and energy. What I seem to find is, we can make progress in any area that I or another DevOps expert can personally inject some energy into. If I’m visible, if I talk to people, if I can build a compelling storyline – we make rapid progress. Without it, we don’t. It’s almost like starting a fire – you can’t just crumple up some newspaper, dump some kindling on it, light a match and walk away. You’ve got to tend it, constantly add material or blow on it to get something going.

We’re spread very thin; energy and time are limited, and without injecting energy things just don’t happen. That’s a very common story – it’s not that we’re lazy, or bad, or stupid – we work very hard, but there’s so much work to be done we can’t spare the cycles to look at how we’re going about things. Sometimes, you need an outside perspective to provide that new idea, or show a different way.

Lead By Listening

One of the base principles of DevOps is to find your area of pain and devote cycles into automating it. That removes a lot of waste, human defects, errors when you’re running a deployment. But that doesn’t resonate when I work with a team that’s new to DevOps. I don’t walk in there with a stone tablet of commandments, “here’s what you should do to do DevOps”. That’s a huge turn-off.

Instead, I start by listening. I talk to each team ask them how they go about their work, what they do, how they do it. Once we find out how things are working, we can also identify some problems – then we can come in and we can talk about how automation can address that problem in a way that’s specific to that team, how DevOps can make their world better. They see a better future and they can go after it.

Tools as an Incentive

I just said the tool isn’t the problem, but that doesn’t mean it’s not a critical part of the solution. I’m a techie at heart and I like a shiny new tool just as much as the next person. You can use tools as incentives to get new changes rolling. It’s a tough sell to walk into a meeting and pitch unit testing as a cure to quality issues if they take a long time to write. But if we talk about using Visual Studio Enterprise and how it makes unit tests simple and it’s able to run them real time, now it becomes easier to do unit testing than to test the old way. If we can show how these tools can shrink testing to be an afterthought instead of a week, now we have your attention!

About a year ago, our CIO set a mandate for the entire organization to excel at both DevOps and Agile. But the architecture wasn’t defined, no tools were specified. Which is terrific – DevOps and Agile is just a way of improving what we can do for the business. We now see different teams having different tech stacks and some variation in the tools based on what their pain point is and what their customers are needing. As a rule, we encourage alignment where it makes sense around either a technology stack or with a common leader. That provides enough alignment that teams can learn from each other and yet look for better ways of solving their issues.

The rule is that each main group in IT should favor a toolchain, but should choose software architecture that fits their business needs. In one area, for example, the focus is on getting changes into production as fast as possible. This is the cutting edge of the blade, so automation and fast turnaround cycles are everything. For them, microservices are a terrific option and the way that their development happens – it fits the business outcomes they want.

Do You Need the Cloud?

They’ll tell you that DevOps means the cloud; you can’t do it without rapid provisioning which means scalable architecture and massive cloud-based datacenters. But we’re almost 100% on-prem. For us, we need to keep our software, especially R&D, privately hosted. That hasn’t slowed us down much. It would certainly be more convenient to have cloud-based data centers and rapid provisioning, but it’s not required by any means.

Metrics We Care About

We focus on two things – lead time (or cycle time in the industry) and production impact. We want to know the impact in terms of lost opportunity – when the fab slows down or stops because of a change or problem. That resonates very well with management, it’s something everyone can understand.

But I tell people to be careful about metrics. It’s easy to fall in love with a metric and push it to the point of absurdity! I’ve don’t this several times. We’ve dabbled in tracking defects, bug counts, code coverage, volume of unit testing, number of regression tests – and all of them have a dark side or poor behavior that is encouraged. Just for example, let’s say we are tracking and displaying volume of regression tests. Suddenly, rather than creating a single test that makes sense, you start to see tests getting chopped up into dozens of tests with one step in them so the team can hit a volume metric. With bug counts – developers can classify them as misunderstood requirement rather than admitting something was an actual bug. When we went after code coverage, one developer wrote a unit test that would bring the entire module of code under test and ran that as one gigantic block to hit their numbers.

We’ve decided to keep it simple – we’re only going to track these 2 things – cycle time and production impact – and the teams can talk individually in their retrospectives about how good or bad their quality really is. The team level is also where we can make the most impact on quality.

I’ve learned a lot about metrics over the years from Bob Lewis’ IS Survivor columns. Chief among those lessons is to be very, very careful about the conversation you have with every metric. You should determine what success looks like, and then generate a metric that gives you a view of how your team is working. All subsequent conversations should be around “if we’re being successful” and not “are we achieving the metric.” The worst thing that can happen is that I got what I measured.

PMO Resistance

Sometimes we see some resistance from the BSA/PM layer. That’s usually because we’re leading with our left foot – the right way is to talk about outcomes. What if we could get code out the door faster, with a happier team, with less time testing, with less bugs? When we lead with the desired outcome, that middle layer doesn’t resist, because we’re proposing changes that will make their lives easier.

I can’t stress this enough – focus on the business outcomes you’re looking for and eliminate everything else. Only pursue a change if the outcome fits one of those business needs.

When we started this quality initiative, initially our release cycle averaged – I wish I was exaggerating – about 300 days. We would invest a huge amount of testing at every site before we would deploy. Today, we have teams with cycle times under 10 days. But that speed couldn’t happen unless our quality had gone up. We had to beef up our communication loop with the fab so if there was a problem we can stop it before it gets replicated.

The Role of Communication

You can’t overstate credibility. As we create less and less impact with changes we deploy, our relationship with our customers in the business gets better and better. Just for example, three years ago we had just gone through a disastrous communication tool patch that had grounded an entire site for hours. We worked through the problems internally and then I came to a plant IT director a year later and told them that we thought the quality issues were taken care of and enlisted their help.

Our next deployment required 5 minutes of downtime and had limited sporadic impact. And that’s been the last real impact we’ve had during software deployment for this tool in almost 3 years – now our deployments are automated and invisible to our users. Slowly building up that credibility and a good reputation for caring about the people you’re impacting downstream has been a big part of our effort.

Cross-Functional Teams

It’s commonly accepted that for DevOps to work you must be cross-functional. We are like many other companies in that we use a Shared Services model – we have several agile teams that include development, QA roles, an infrastructure team, and Operations which handles trouble tickets from the sites – each with their own leader. This might be a pain point in many companies, but for us it’s just how we work. We’ve learned to collaborate and share the pain so that we’re not throwing work over the fence. It’s not always perfect, but it’s very workable.

For example, in my area every week we have a recap meeting which Ops leads, where they talk about what’s been happening in production and work out solutions with the dev managers in the room. In this way the teams work together and feel each other’s pain. We’re being successful and we haven’t had to break up the company into fully cross-functional groups.

Purists might object to this – we haven’t combined Development and Operations, so can we really say that we are “doing DevOps”? If it would help us drive better business outcomes, that org reshuffling would have happened. But for us, since the focus is on business outcomes, not on who we report to, our collaboration cross team is good and getting better every day. We’re all talking the same language, and we didn’t have to reshuffle. We’re all one team. The point is to focus on the business outcomes and if you need to reorg, it will be apparent when teams talk about their pain points.

If It Comes Easy, It Doesn’t Stick

Circling back to energy – sometimes I sit in my office and wish that culture was easier to change. It’d be so great if there was a single metric we could align on, or a magical technique where I could flip a switch and everyone would get it and catch fire with enthusiasm. Unfortunately, that silver bullet doesn’t exist.

Sometimes I listen to Dave Ramsey on my way in to work – he talks about changing the family tree and getting out of debt. Something he said though resonated with me – “If it comes easy, it doesn’t stick.” If DevOps came easy for us, it wouldn’t really have the impact on our organization that we need. There’s a lot of effort, thought, suffering – pain, really – to get any kind of outcome that’s worth having.

As long as you focus on the outcome, I believe DevOps is a fantastic thing for just about any organization. But, if you view it as a recipe that you need to follow, or a checklist – you’re on the wrong track already, because you’re not thinking about outcomes. If you build from an outcome that will help your business and think backwards to the best way of reaching that outcome – then DevOps is almost guaranteed to work.