I’ve done a few articles on Application Insights – (older ones here and here) – but none yet on Operations Management Suite, because 1) I’m not IT in my background and 2) I’m busy, leave me the heck alone! (I kid, we’ll get to it eventually.) These have all been more how-to – and admittedly it’s so easy I hesitate to call it that – versus why-to. But last night on a plane coming back from Kansas City, I was mulling this over. (It helps that I had the excellent if somewhat clunky “The Art of Monitoring” on my Kindle.)

Monitoring has long been the secret sauce of DevOps. How else do we get feedback on our priorities, and actual metrics – not guesses – on which features are in use? What’s often overlooked though is that it can actually help you fight back against the wrong kind of change management – one that increases your bureaucratic workload and actually makes your build riskier and harder to fix. How is that possible?

The Blame Game

Let’s start with some basic negative cycles we’ve all seen when there are very visible production outages. When bad things happen in production, we immediately start seeing the oddest thing happen – the SDLC process starts to dissolve into a cycle of blame and recriminations.

Take the example of Knight Capital in 2012. My good friend Donovan Brown often cites this as a warning example. Here, one messy 15 minute deployment led to a $440M loss. In the wake of a disaster like this, John Allspaw noted that there are two counterfactual narratives that spring up:

- Blame change control. “Hey, better CM practices could have prevented this!”

- Blame testing. “If we had better QA, we at least could have taken steps to detect it faster and recover!”

It’s hard to argue with either of these. And it’s true, the RIGHT kind of change controls do need to be implemented. But by clenching like this, as Gene Kim has noted in The DevOps Handbook, “in environments with low-trust, command and control cultures, the outcomes of their change control and testing countermeasures end up hurting more than they help. Builds become bigger, less frequent and more risky.” Why is this?

This is because the dev/QA team begins implementing increasingly clunky test suites that take longer to execute, or writing unit tests that frequently don’t catch errors in the user experience. In a pinch, the QA team begins adding a significant amount of manual smoke testing in place of automated tests. Management begins imposing long, mandatory change control boards every week to approve releases and go over defects introduced in previous weeks – I’ve seen these groups grow into the hundreds, most of whom are very far removed from the application. More controls, remote gatekeepers and a manual approval process lead to increased batch sizes and deployment lead times – which reduces our chances of a successful deployment for both Dev and Ops. Our feedback loop stretches out, which reduces its value. A key finding of several studies is that high performing orgs rely more on peer review and less on external approval of changes. The more orgs rely on change approval, the worse their IT performance in both stability (MTTR and change fail rate) and throughput (deployment lead times and frequency).

This is often where I tell the story of my dad and me, trying to cut down a few trees for my uncle that had fallen across a local creek in NW Washington in a storm. The river had risen several feet and we city boys were standing below the dam formed by these large tree trunks. I remember looking up at the water swelling and pushing against the trees, as we were cutting into them, and thinking what those several feet of water would do once released. It didn’t take a lot of imagination to picture the outcome – two idiots being swept out to the Pacific Ocean – but the problem was my uncle was standing a few dozen feet away, hands on his hips, watching us with his lips tight in a disapproving line. I told my father, “Dad, I don’t care what it takes, but we need to find a way of breaking that chainsaw!” That’s the kind of backlog that can form and choke your release cycle, reducing flow and increasing build sizes and risk. (And, by accidentally dunking the chainsaw, we were able to successfully kill the project and earn the lasting contempt of my uncle – “I want to thank you boys – it’s been a long time since I’ve been to the circus!”)

Telemetry To The Rescue

The main issue above is that this overreactive organization was trying to prevent errors and bugs from happening. Sometimes, they even call their recap (punitive!) meetings “Zero Defect Meetings” – as if that kind of operational perfection is attainable! In contrast, DevOps-savvy companies don’t focus on MTBF (Mean Time Between Failures), that is, on driving their failure count down. They know outages are going to happen. Instead, they try to treat each failure as an opportunity – what test was missing that could have caught this, what gap in our processes can address this next time? Above all, they focus on improving their REACTION time – their time to recovery, MTTR (Mean Time to Recover). Testing and automated instrumentation – that famous passage about wanting “cattle not pets”, i.e. blowing away and recreating environments at whim – forms the heart of their adaptive, flexible response strategy.
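If you want to see how little it takes to start tracking MTTR, here is a minimal sketch in Python. It assumes you already record when each outage was detected and when it was resolved; the function name and the sample incident log are made up for illustration.

```python
from datetime import datetime, timedelta

def mean_time_to_recover(incidents):
    """Average of (resolved - detected) across incidents; None if there are none."""
    if not incidents:
        return None
    total = sum((resolved - detected for detected, resolved in incidents), timedelta())
    return total / len(incidents)

# Hypothetical outage log: (detected, resolved) pairs.
incidents = [
    (datetime(2017, 3, 1, 9, 0), datetime(2017, 3, 1, 9, 30)),    # 30 minutes
    (datetime(2017, 3, 8, 14, 0), datetime(2017, 3, 8, 15, 30)),  # 90 minutes
]

print(mean_time_to_recover(incidents))  # 1:00:00
```

The point is the trend rather than any single number: if this figure drops release over release, your recovery story is getting better even if the outage count doesn't change.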

Puppet Labs – in their excellent 2014 “State of DevOps” report – mentioned that organizations that want to improve on their reaction time (MTTR) benefit the most – and it’s not even close, by an order of magnitude – from two technical tools/approaches:

- Use of version control for all production artifacts – When an error is identified in production, you can quickly either redeploy the last good state or fix the problem and roll forward, reducing the time to recover.

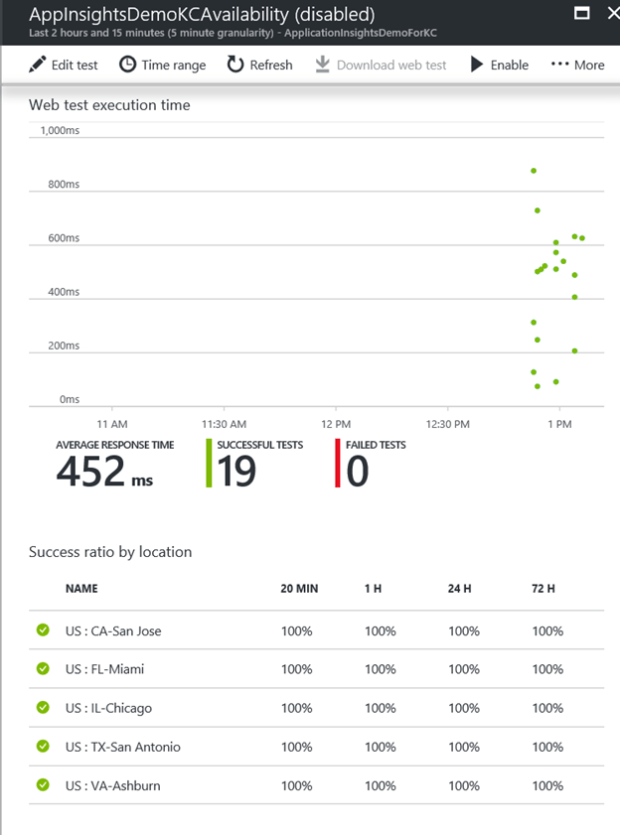

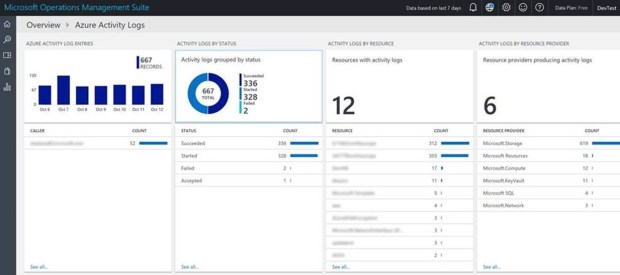

- Monitoring system and application health – Logging and monitoring systems make it easy to detect failures and identify the events that contributed to them. Proactive monitoring of system health based on threshold and rate-of-change warnings enables us to preemptively detect and mitigate problems.
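To make “threshold and rate-of-change warnings” a little more concrete, here is a rough sketch of the idea in Python. Everything in it (the function name, the sample format, the limits) is an assumption for illustration and not how any particular monitoring product does it.

```python
def check_metric(samples, threshold, max_rate):
    """Return warnings for a series of (seconds, value) samples.

    threshold: warn when the latest value exceeds this.
    max_rate:  warn when the value climbs faster than this many units
               per second between the last two samples.
    """
    warnings = []
    if not samples:
        return warnings
    t_last, v_last = samples[-1]
    if v_last > threshold:
        warnings.append("threshold")
    if len(samples) >= 2:
        t_prev, v_prev = samples[-2]
        elapsed = t_last - t_prev
        if elapsed > 0 and (v_last - v_prev) / elapsed > max_rate:
            warnings.append("rate_of_change")
    return warnings

# Hypothetical disk usage (percent), sampled once a minute:
# still under the 90% threshold, but climbing fast.
disk = [(0, 40.0), (60, 42.0), (120, 70.0)]
print(check_metric(disk, threshold=90.0, max_rate=0.25))  # ['rate_of_change']
```

The rate-of-change check is what makes this proactive: the disk is only at 70%, so a plain threshold alert stays silent, but the jump between the last two samples tells you trouble is coming.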

We’re going to talk about monitoring here. How can monitoring help turn the tide for us so we don’t overreact because of a production outage?

So above we can see a few fixes that can transform that reactive, vicious cycle into a responsive but measured virtuous cycle that addresses the core problems you’re seeing in PROD. Some are nontechnical or more process related than anything else – and note that fixing the issue starts with purity of code – as early in the process as possible:

- Adding or strengthening production telemetry (we can confirm if a fix works – and autodetect next time)

- Devs begin pushing code to prod (I can quickly see what’s broken and make decisions to roll back vs patch. A note on this: a rollback – going to a previous version – is almost always easier and less risky. But sometimes fixing forward and rolling out a change using your deployment process is the best way forward.)

- Peer reviews. This includes not just code deployments but ops/IT changes to environments! (Remember The Phoenix Project: 80% of our issues caused by unauthorized changes, often by IT to environments, and 80% of our time stuck figuring out what in this soup of changes caused the issue – before we even lift a finger to resolve anything! I’ll write more about how to do a productive peer review – especially pair programming, which is really a code review as you program – later.)

- Better automated testing (again, more on this later. Look at Jez Humble’s excellent Continuous Delivery or Agile Testing for more on this.)

- Batch sizes get smaller. The secret to smooth and continuous flow is making small, frequent changes.

A key driver here though is information radiators, a concept that traces back to Toyota’s Lean principles. These create a feedback loop that broadcasts issues back as quickly as possible, radiating information out on how things are going.

Etsy – just to take one company as an example – takes monitoring so seriously that some of their architects have been quoted as saying their monitoring systems need to be more available and scalable than the systems they’re monitoring. One of their engineers was quoted as saying, “If Engineering at Etsy has a religion, it’s the Church of Graphs. If it moves, we track it. Sometimes we’ll draw a graph of something that isn’t moving yet, just in case it decides to make a run for it. Tracking everything is the key to moving fast, but the only way to do it is to make tracking anything easy. We enable engineers to track what they need to track, at the drop of a hat, without requiring time-sucking configuration changes or complicated processes.”
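That quote comes from the Etsy post that introduced StatsD, and the “drop of a hat” part is worth seeing in code. Below is a minimal sketch of a StatsD-style counter in Python. The `name:value|c` line format and UDP port 8125 are StatsD’s conventions; the `format_counter` and `track` helper names, the metric name and the localhost address are assumptions for illustration.

```python
import socket

def format_counter(name, value=1):
    """Build a StatsD counter line, e.g. 'checkout.completed:1|c'."""
    return f"{name}:{value}|c"

def track(name, value=1, host="127.0.0.1", port=8125):
    """Fire-and-forget a counter over UDP to a StatsD-style listener."""
    try:
        with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
            sock.sendto(format_counter(name, value).encode("ascii"), (host, port))
    except OSError:
        pass  # telemetry being down should never take the application down

print(format_counter("checkout.completed"))  # checkout.completed:1|c

# At the call site, tracking something new is a single line:
# track("checkout.completed")
```

Because it is UDP and fire-and-forget, the application never waits on the monitoring system, and adding a new metric needs no configuration change on the sending side.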

Another great thinker in the DevOps space, Ernest Mueller, has said – “One of the first actions I take when starting in an organization is to use information radiators to communicate issues and detail the changes we are making. This is usually extremely well received by our business units, who were often left in the dark before. And for Development and Operations groups who must work together to deliver a service to others, we need that constant communication, information and feedback.”

I know I found that to be true in my career. I discovered this fairly early on in my adoption of Agile with some sportswear companies here in the Oregon region. I worked for some very personality-driven orgs with highly charged, negative dynamics between teams. As I adopted Agile, which meant broadcasting honest retrospectives – including my screw-ups and failure to meet sprint goals – I expected a Donkey Kong type response and falling hammers. The most shocking thing happened though – the more brutally honest and upfront I was on what had gone wrong, the better my relationship became with the business and my IT partners. And mistakes we made on the team were owned up to – and they typically didn’t repeat, not without the group holding the culprit (including me) responsible. That kind of “government in the sunshine” type transparency and candor was the biggest single turning point of our Agile transformation.

It’s been said, rightly, that every lie we tell ourselves comes with a payoff and a price.

I believe that very much to be the case. For developers or IT, we’ve been very used to thinking we are AWESOME and WONDERFUL and the OTHER GUYS are cowboys/bureaucratic tools and are EVIL. Maybe that story – which has the short term payoff of making us feel virtuous – comes with a heavy price, of limiting our success in rolling out easy to manage and maintain applications and delivering business value faster. By using instrumentation and telemetry, we demonstrate that we are not lying to ourselves or to our customers/the business. And suddenly a lot of those highly charged, politically sensitive meetings you find yourself in lose a lot of their subjectivity and poison – the focus is on improving numbers versus the negative punish/blame scenario.

In Closing

- Like testing, instrumentation and monitoring seem to be a bolt-on or an afterthought in every project. That’s a huge mistake. Make instrumentation and metrics the backbone of your DevOps movement, as it’s the only thing that will tell you if you’re making specific progress and earn you credibility in the eyes of the business.

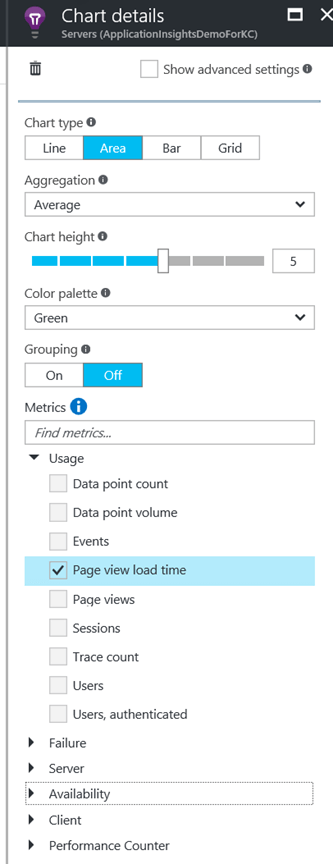

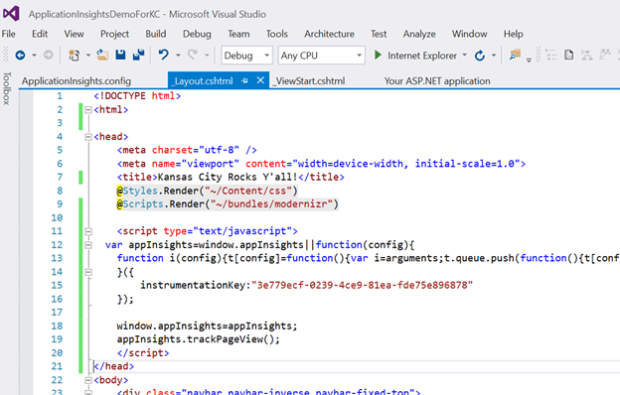

- Don’t let your developers tell you that it’s too hard or have it be an afterthought. It takes just a few minutes to make your release and application availability metrics available to all.

- And if your telemetry system is difficult to implement or doesn’t collect the metrics you need, think about switching. Remember the Etsy lesson – making it easy and quick is the way to go. (Which is why I really like App Insights!)