The following content is shared from an interview with Jon Cwiak, Enterprise Cloud Platform Architect at Humana. What we loved about talking with Jon was his candor – he’s very honest and upfront that Humana’s adoption of DevOps has not always been smooth, and about the struggles and challenges they’re still facing. Along the way we picked up some eye-opening insights:

- Having a DevOps team isn’t necessarily a bad thing

- How can you break down walls and change very traditional mindsets or siloed groups?

- How two metrics alone can tell you about your organization’s health

- The Humana story as a practical roadmap, from version control to config management to feature toggles and microservices

- The power of laziness as a positive career trait!

We loved our talk with Jon and wanted to share his thoughts with the community. Note – these and other interviews and case studies will form the backbone of our upcoming book “Achieving DevOps” from Apress, due out in late 2018. Please contact me if you’d like an advance copy!

My name is Jon Cwiak – I’m an enterprise software architect on our enterprise DevOps enablement team at Humana, a large health insurance company based out of Kentucky. We are in the midst of a transition from the traditional insurance business into what amounts to a software company specializing in wellness and population health.

Our main function is to promote the right practices among our engineering teams. So I spend a big part of each week reinforcing to groups the need for hygiene – that old cliché about going slow to go fast. Things like branching strategy, version control, configuration management, dependency management – those things aren’t sexy but we’ve got to get it right.

Some of our teams though have been doing work in a particular way for 15 years; it’s extraordinarily hard to change these indoctrinated patterns. What we are finding is, we succeed if we show we are adding value. Even with these long-standing teams, once they see how a stable release pipeline can eliminate so much repetitive work from their lives, we begin to make some progress.

We are a little different in that there was no trumpet call of “doing DevOps” from on high – instead it was crowdsourced. Over the past 5 years, different teams in the org have independently found a need to deliver products and services to the org at a faster cadence. It’s been said that software is about two things – building the right thing and building the thing right. My group’s mission is all about that second part – we provide the framework, all the tools, platforms, architectural patterns and guidance on how to deliver cheaper, faster, smarter.

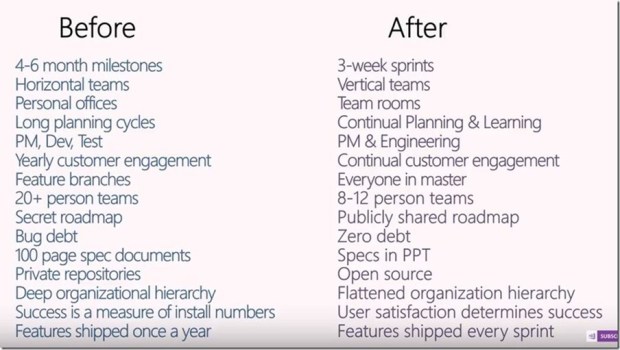

The big picture that’s changed for us as a company is the realization that big-bang, waterfall, ship-everything-in-9-months-or-more mega-events just don’t cut it anymore. We used to do those vast releases – a huge flow of bits like water; we called it a tsunami release. Well, just like with a real tsunami, there’s a wave of devastation after the delivery of these large platforms all at once that can take months of cleanup. We’ve changed from tsunami thinking to ripples, with much faster, more frequent releases.

When the team first started up in 2012, the first thing we noticed was that everything was manual. And I mean everything – change requests, integration activity, testing. There were lots of handoffs, lots of Conway’s law at work.

So we started with the basics. For us, that was getting version control right – starting with basic hygiene practices, doing things in ways that decouple you from the way in which the software is being delivered. Just as an example, we used to label releases by the year, quarter and month a release was targeted for. So if a feature suddenly wasn’t needed in that release, it was complete integration hell: lots of merges, lots of drama as we backed things out. So we moved toward semantic versioning, where products are versioned regardless of when they’re delivered. Since this involved dozens of products and a lot of reorganization, getting version control right took the better part of 6 months for us. But it absolutely was the ground level for us being able to go fast.
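As a rough illustration of that shift, here is a minimal Python sketch of semantic versioning, where the kind of change drives the version number and the calendar plays no part. The `SemVer` class and the change labels (`breaking`, `feature`, `fix`) are hypothetical names chosen for this example, not anything Humana described using.

```python
from dataclasses import dataclass


@dataclass(frozen=True, order=True)
class SemVer:
    """A MAJOR.MINOR.PATCH version, compared field by field."""
    major: int
    minor: int
    patch: int

    @classmethod
    def parse(cls, text: str) -> "SemVer":
        # Expects exactly three dot-separated integers, e.g. "2.3.1".
        major, minor, patch = (int(part) for part in text.split("."))
        return cls(major, minor, patch)

    def bump(self, change: str) -> "SemVer":
        # The kind of change decides the next version, not the target date.
        if change == "breaking":
            return SemVer(self.major + 1, 0, 0)
        if change == "feature":
            return SemVer(self.major, self.minor + 1, 0)
        if change == "fix":
            return SemVer(self.major, self.minor, self.patch + 1)
        raise ValueError(f"unknown change type: {change}")

    def __str__(self) -> str:
        return f"{self.major}.{self.minor}.{self.patch}"
```

Because the version says nothing about a quarter or month, pulling a feature out of a release does not force a relabel of everything around it.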

Next up was fixing the way the devs worked. We had absolutely no confidence in the build process because it was xcopy manual deployments – so there was no visibility, no accountability, and no traceability. This worked great for the developers, but terrible for everyone else having to struggle with “it works on my machine!” So, continuous integration was the next rung on the ladder, and we started with a real enterprise build server. Getting to a common build system was enormously painful for us; don’t kid yourself that it’s easy. It exposed, application by application, all the gaps in our version control, a lot of hidden work we had to race to keep ahead of. But once the smoke cleared, we’d eliminated an entire category of work. Now, version control was the source of fact, the build server artifacts were reliable and complete. Finally we had a repeatable build system that we could trust.

The third rung of the ladder was configuration management. It took some bold steps to get our infrastructure under control. Each application had its own unique and beautiful configuration, and no two environments were alike – dev, QA, test, production, they were all different. Trying to figure out where these artifacts were and what the proper source of truth was required a lot of weekends playing “Where’s Waldo”! Introducing practices like configuration transforms gave us confidence we could deploy repeatedly and get the same behavior and it really helped us enforce some consistency. The movement toward a standardized infrastructure – no snowflakes, everything the same, infrastructure as code – has been a key enabler for fighting the config drift monster.
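The idea behind configuration transforms can be sketched as one shared base configuration plus a small, reviewable set of overrides per environment. The Python below is only an analogy under that assumption: the keys, hostnames and function names are made up for illustration, and it is not the transform tooling the team actually used.

```python
from copy import deepcopy

# Hypothetical base settings shared by every environment.
BASE = {
    "database": {"host": "localhost", "pool_size": 5},
    "logging": {"level": "DEBUG"},
}

# Hypothetical per-environment overrides; only the differences are listed.
TRANSFORMS = {
    "qa": {"database": {"host": "qa-db.example.internal"}},
    "production": {
        "database": {"host": "prod-db.example.internal", "pool_size": 50},
        "logging": {"level": "WARNING"},
    },
}


def apply_transform(base: dict, overrides: dict) -> dict:
    """Return a copy of base with overrides merged in, recursing into nested dicts."""
    result = deepcopy(base)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(result.get(key), dict):
            result[key] = apply_transform(result[key], value)
        else:
            result[key] = deepcopy(value)
    return result


def config_for(environment: str) -> dict:
    # Environments without an entry simply get the base configuration.
    return apply_transform(BASE, TRANSFORMS.get(environment, {}))
```

Keeping the differences this small and explicit is what makes drift visible: anything not listed in an override is, by construction, identical across environments.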

The data layer has been one of the later pieces to the puzzle for us. With our move to the cloud, we can’t wait for the thumb of approval from a DBA working apart from the team. So teams are putting their database under version control, building and generating deployable packages through DACPACs or ReadyRoll, and the data layer just becomes another part of the release pipeline. I think over time that traditional role of the DBA will change and we’ll see each team having a data steward and possibly a database developer; it’s still a specialized need and we need to know when a data type change will cause performance issues for example, but the skillset itself will get federated out.

Using feature toggles changes the way we view change management. We’ve always viewed delivery as the release of something. Now we can say, the deployment and the release are two different activities. Just because I deploy something doesn’t mean it has to be turned on. We used to view releases as a change, which means we needed to manage them as a risk. Feature toggles flip the switch on this where we say, deployments can happen early and often and releases can happen at a different cadence that we can control, safely. What a game-changer that is!
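A minimal sketch of that separation, assuming a simple in-memory toggle store: the new code path is deployed along with everything else but stays dark until someone releases it. `FeatureToggles`, `quote_premium` and the `wellness_discount` flag are hypothetical names for illustration; a real system would more likely read toggle state from a config service or database.

```python
class FeatureToggles:
    """Tracks which deployed features are currently switched on."""

    def __init__(self, enabled=None):
        self._enabled = set(enabled or [])

    def is_enabled(self, name: str) -> bool:
        return name in self._enabled

    def release(self, name: str) -> None:
        # Turning a feature on is a runtime decision, separate from deploying it.
        self._enabled.add(name)

    def roll_back(self, name: str) -> None:
        # Turning it off again needs no redeployment.
        self._enabled.discard(name)


def quote_premium(base_rate: float, toggles: FeatureToggles) -> float:
    # The discount logic ships with the deployment but only runs once released.
    if toggles.is_enabled("wellness_discount"):
        return round(base_rate * 0.9, 2)
    return base_rate
```

With this shape, `quote_premium` behaves exactly as before until `release("wellness_discount")` is called, and `roll_back` restores the old behavior without touching the deployed code.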

COTS products and DevOps totally go together. Think about it from an ERP perspective – where you need to deliver customizations to an ERP system, or Salesforce.com or whatever BI platform you’re using. The problem is, these systems weren’t designed in most cases to be delivered in an agile fashion. These are all big bang releases, with lots of drama, where any kind of meaningful customization is near taboo because it’ll break your next release. To bridge this gap, we tell people not to change but add – add your capabilities and customizations as a service, and then invoke them through a middleware platform. So you don’t change something that exists, you add new capabilities and point to them.

Gartner’s concept of bimodal IT I struggle with, quite frankly. It’s true you can’t have a one size fits all risk management strategy – you don’t want a lightweight website going through the long review period you might need with a legacy mainframe system of record for example. But the whole concept that you have this bifurcated path of one team moving at this fast pace, and another core system at this glacial pace – that’s just a copout I think, an excuse to avoid the modern expectations of platform delivery.

We do struggle with long lived feature branches. It’s a recurring pain point for us, we call it the integration credit card that teams charge to and it inevitably leads to drama at release time, some really long weekends. In a lot of cases the team knows this is bad practice and they definitely want to avoid it, but because of cross dependencies we have these long-lived branches. The other issue is contention, which usually is an architecture issue. We’re moving towards a one repo, one build pipeline and decomposing software down to its constituent parts to try to reduce this, but decoupling these artifacts is not an overnight kind of thing.

The big blocker for most organizations seems to be testing. Developers want to move at speed, but the way we test – usually manually – and our lack of investment in automated unit tests create these long test cycles, which in turn spawn these long-lived release branches. The obvious antidote is feature toggles to decouple deployment from delivery.

I gave a talk a few years back called “King Tut Testing” where we used Mike Cohn’s testing pyramid to talk about where we should be investing in our testing. We are still in the process of inverting that pyramid – moving away from integration testing and lessening functional testing, and fattening up that unit testing layer. A big part of the journey for us is designing architectures so that they are inherently testable, mockable. I’m more interested in test driven design than I am test driven development personally, because it forces me to think in terms of – how am I going to test this? What are my dependencies, and how can I fake or mock them so that the software is verifiable? The carrot I use in talking about this shift and convincing teams to invest in unit testing is, not only is this your safety net, it’s a living, breathing definition of what the software does. So for example, when you get a new person on the team, instead of weeks of manual onboarding, you use the working test harness to introduce them to how the software behaves and give them a comfort level in making modifications safely.
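One way to picture “inherently testable, mockable” design is a component that receives its dependency from outside, so a test can hand it a fake. The sketch below assumes a hypothetical claims scenario; `EligibilityService`, `ClaimProcessor` and `FakeEligibility` are illustrative names, not anything from Humana’s codebase.

```python
from typing import Protocol


class EligibilityService(Protocol):
    """The dependency, described only by the behavior the processor needs."""

    def is_eligible(self, member_id: str) -> bool: ...


class ClaimProcessor:
    def __init__(self, eligibility: EligibilityService):
        # The dependency is injected, so tests can swap in a fake.
        self._eligibility = eligibility

    def process(self, member_id: str, amount: float) -> str:
        if amount <= 0:
            return "rejected: invalid amount"
        if not self._eligibility.is_eligible(member_id):
            return "rejected: member not eligible"
        return "approved"


class FakeEligibility:
    """In-memory stand-in for the real service; no network, no database."""

    def __init__(self, eligible_ids):
        self._eligible_ids = set(eligible_ids)

    def is_eligible(self, member_id: str) -> bool:
        return member_id in self._eligible_ids
```

A handful of tests written against `FakeEligibility` then doubles as the living description of behavior mentioned above: a new team member can read them to see what an approved or rejected claim looks like.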

The books don’t stress enough how difficult this is. There’s just not the ROI to support creating a fully functional set of tests with a brownfield software package in most cases. So you start with asking, where does this hurt most? – using telemetry or tools like SonarQube. And then you invest in slowing down, then stopping the bleeding.

Operations support in many organizations tends to be more about resource utilization and cost accounting – how do I best utilize this support person so he’s 100% busy? And we have ticketing systems that create a constant source of work for Operations and activity. The problem with this siloed thinking is that the goal is no longer developing the best software possible and providing useful feedback, it’s now closing a ticket as fast as possible. We’re shifting that model with our move to microservices to teams that own the product and are responsible for maintaining and supporting it end to end.

Lots of vendors are trying to sell DevOps In A Box – buy this product, magic will happen. But they don’t like to talk about all the unsexy things that need to be done to make DevOps successful – four years to clean up version control, for example. It’s kind of a land grab right now with tooling – some of those tools are great in unicorn space but don’t work so well with teams that are using long lived feature branches.

Every year we do an internal DevOps Day, and that’s been so great for us in spreading enthusiasm. I highly recommend it. The subject of the definition of DevOps inevitably comes up. We like Donovan Brown’s definition and that’s our standard – one of the things I will add is, DevOps is an emergent characteristic. It’s not something you buy, not something you do. It’s something that emerges from a team when you are doing all the right things behind the scenes, and these practices all work together and support each other.

There are lots of metrics to choose from, but two metrics stand out – and they’re not new or shocking. Lead time and cycle time. Those two are the standard we always fall back on, and the only way we can tell if we’re really making progress. They won’t tell us where we have constraints, but they do tell us which parts of the org are having problems. We’re going after those with every fiber of our effort. There are other line of sight metrics, but those two are dominant in determining how things are going.
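Definitions of these two metrics vary between teams, so the sketch below states its own as assumptions: lead time runs from when work is requested to when it is delivered, and cycle time runs from when work actually starts to when it is delivered. The `WorkItem` fields and the sample dates are made up for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class WorkItem:
    requested: datetime  # when the work was asked for
    started: datetime    # when someone began working on it
    delivered: datetime  # when it reached production

    @property
    def lead_time(self) -> timedelta:
        # Assumed definition: request to delivery.
        return self.delivered - self.requested

    @property
    def cycle_time(self) -> timedelta:
        # Assumed definition: start of work to delivery.
        return self.delivered - self.started


def average(durations) -> timedelta:
    durations = list(durations)
    return sum(durations, timedelta()) / len(durations)


# Hypothetical sample data.
ITEMS = [
    WorkItem(datetime(2018, 1, 1), datetime(2018, 1, 8), datetime(2018, 1, 11)),
    WorkItem(datetime(2018, 1, 5), datetime(2018, 1, 6), datetime(2018, 1, 11)),
]

AVERAGE_LEAD_TIME = average(item.lead_time for item in ITEMS)
AVERAGE_CYCLE_TIME = average(item.cycle_time for item in ITEMS)
```

A large gap between the two averages suggests work is sitting in a queue before anyone picks it up, which matches the point above: the numbers show which part of the org is struggling, not why.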

We do value stream analysis and map out our cycle time, our wait time, and handoffs. It’s an incredibly useful tool in terms of being a bucket of cold water right to the face – it exposes the ridiculous amount of effort being wasted in doing things manually. That exercise has been critical in helping prove why we need to change the way we do things. It’s specific, quantitative – people see the numbers and get immediately why waiting two weeks for someone to push a button is unacceptable. Until they see the numbers, it always seems to be emotional.
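The arithmetic behind a value stream map is simple enough to sketch. The example below assumes each step records hands-on process time and idle wait time, and computes flow efficiency as process time divided by total elapsed time. The step names and hours are invented, apart from the two-week wait for a button push, which echoes the example above.

```python
from dataclasses import dataclass


@dataclass
class Step:
    name: str
    process_hours: float  # time someone is actively working
    wait_hours: float     # time the work sits idle before or during this step


def flow_efficiency(steps) -> float:
    """Share of total elapsed time that is actual work."""
    process = sum(step.process_hours for step in steps)
    total = process + sum(step.wait_hours for step in steps)
    return process / total


def biggest_wait(steps) -> Step:
    """The step where work sits idle the longest."""
    return max(steps, key=lambda step: step.wait_hours)


# Hypothetical value stream for one change.
STEPS = [
    Step("code review", process_hours=2, wait_hours=22),
    Step("build and test", process_hours=1, wait_hours=1),
    Step("deploy approval", process_hours=1, wait_hours=336),  # two weeks
]
```

Running `biggest_wait(STEPS)` on data like this points straight at the approval step, which is the kind of unemotional, quantitative evidence the exercise is meant to produce.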

A consistent definition of done – well, we’re getting there. Giving people 300 page binders, or a checklist, or templated tasks so developers have to check boxes – we’ve tried them all, and they’re just not sustainable. The model that seems to work is where the team is self-policing, where a continuous review is happening of what other people on the team are doing. That kind of group accountability is so much better than any checklist. You have to be careful though – it’s successful if the culture supports these reviews as a learning opportunity, a public speaking opportunity, a chance to show and tell. In the wrong culture, peer reviews or code demos become a kind of group beat-down where we are criticizing and nitpicking other people’s investment.

A DevOps team isn’t an antipattern like people say. Centralizing the work is not scalable, that is definitely an antipattern. But I love the mission our team has, enabling other groups to go faster. It’s kind of like being a consulting team – architectural guidance and consulting, practices. It’s incredibly rewarding to help foster this growing culture within our company, we are seeing this kind of organic center of excellence spring up.

What I like to tell people is, be like the best developers out there, and be incredibly selfish and lazy. If you’re selfish, you invest in yourself – improving your skillset, in the things that will give you a long-term advantage. If you’re lazy, you don’t want to work harder than you have to. So you automate things to save yourself time. Learning and automation are two very nice side effects of being lazy and selfish, and it’s a great survival trait!